Conversation

This replaces the option to exclude the microphone inputs from the master output. That was sufficient for broadcasting users, but there was no way to have a master output with the mics and a booth monitor signal without the mics for live use. Previously that required plugging a mic into an external hardware mixer, but now it is possible to do with Mixxx and any sound card with enough output channels.

| &crossfaderLeftGain, &crossfaderRightGain); | ||

|

|

||

| // All other channels should be adjusted by ducking gain. | ||

| // The talkover channels are mixed in later |

There was a problem hiding this comment.

doesn't this comment apply anymore?

There was a problem hiding this comment.

I'll add the comment back.

| m_pMaster, m_pTalkoverDucking->getGain(iBufferSize / 2), | ||

| m_pTalkover, 1.0, | ||

| iBufferSize); | ||

| SampleUtil::copy(m_pBooth, m_pMaster, iBufferSize); |

There was a problem hiding this comment.

we can get rid of this copy loop, if we redirect booth to master. It would also be nice, if we are able to skip both processing in case it is not used.

| m_pOutputBusBuffers[EngineChannel::RIGHT], 1.0, | ||

| // Mix the talkover mix with the master output, but exclude it from | ||

| // the booth output. | ||

| SampleUtil::copy(m_pBooth, m_pMaster, iBufferSize); |

There was a problem hiding this comment.

you schould swap the buffer creation order. I think the both buffet content is always rewired, so this can be created first. Maybe you could give the buffets a more technical, content related name and let the output buffet pointer point to the configured content.

There was a problem hiding this comment.

I don't understand. Swapping the buffer creation order would always include the talkover mix in the booth output.

| } | ||

| // Just copy Master to Sidechain since we have already added | ||

| // Talkover above | ||

| SampleUtil::copy(*m_ppSidechain, m_pMaster, iBufferSize); |

| m_pOutputBusBuffers[EngineChannel::RIGHT], 1.0, | ||

| // Mix the talkover mix with the master output, but exclude it from | ||

| // the booth output. | ||

| SampleUtil::copy(m_pBooth, m_pMaster, iBufferSize); |

There was a problem hiding this comment.

an other null copy.

I think the ducking gain schould be added to the both output. otherwise it is quite loud in the booth during an announcement.

|

I think the headphone mix schould now use booth instead of master. |

|

And we need to reconsider the place for the delay compensation. |

|

I did not mean to change the buffer contents. I mean, create first a buffer

with the master mix an without mic. Let's call it talkoververFreeMixx, than

create an other buffer by adding talkoververFreeMixx: talkoververMixx.

Now you can let pMaster point to the. TalkoverMixx and pBooth to the

talkoververFreeMixx. The sidechain can also point to TalkoverMixx. And

there Headphone mix can also just point to talkoververFreeMixx = booth.

If we configure that booth includes talkoverver. We can skip the creation

of talkoververFreeMixx.

Am 08.06.2017 3:16 nachm. schrieb "Be" <notifications@github.com>:

*@Be-ing* commented on this pull request.

------------------------------

In src/engine/enginemaster.cpp

<#1279 (comment)>:

} else {

- SampleUtil::copy3WithGain(m_pMaster,

- m_pOutputBusBuffers[EngineChannel::LEFT], 1.0,

- m_pOutputBusBuffers[EngineChannel::CENTER], 1.0,

- m_pOutputBusBuffers[EngineChannel::RIGHT], 1.0,

+ // Mix the talkover mix with the master output, but exclude it from

+ // the booth output.

+ SampleUtil::copy(m_pBooth, m_pMaster, iBufferSize);

I don't understand. Swapping the buffer creation order would always include

the talkover mix in the booth output.

—

You are receiving this because you commented.

Reply to this email directly, view it on GitHub

<#1279 (comment)>, or mute

the thread

<https://github.com/notifications/unsubscribe-auth/ABsfItNOaU1qTKvaCbzXSEjXVgyf4HU2ks5sB_QrgaJpZM4Nzj5Y>

.

|

plus various optimizations and code cleanup

|

I have added a booth gain knob. Initially I did this because it may be more convenient to adjust that with a mouse or DJ controller while DJing than the sound card's output level or amplifier of the booth speakers. I have added a |

Selectable between 3 modes: * Mics routed to master * Mics routed to master & booth * Direct monitoring; mics not mixed in software TODO: latency compensation for auxiliary inputs

|

I have implemented input latency compensation for microphone inputs. Now there are 3 options for how to mix the microphones:

When mixing the mics in software with the master only or master + booth options, the master & booth signals are delayed. The default delay is equal to Mixxx's processing latency, which comes close to being on time. However, the real round trip latency needs to be measured with a physical cable and a program like jack_iodelay then entered into the preferences. When properly set up, it is much easier for a live musician to stay on beat. If the live musician is on beat with what they are hearing out the speakers, when their mic input is mixed with the master and/or booth outputs, it will be on beat, albeit delayed. Previously, without any latency compensation, if the performer was on beat with what they were hearing, the master/booth output would be off beat, which is very disorienting and makes it pretty much impractical to perform. When set to the direct monitoring mode, the mics are not mixed with Mixxx's master or booth outputs. The master and booth outputs are not delayed to avoid adding unnecessary latency. What is different now is that the recording/broadcasting signal is delayed, so if the mics are being directly monitored (mixed in hardware with the master/booth output), the signal that is recorded & broadcasted is the same signal that is heard through the speakers. If the user does not want to hear the mics in the speakers at all, they can use this mode without direct monitoring to avoid adding the extra latency introduced by using the master only mode and listening to the booth output. The headphone output is delayed in the same way as the master output. If no microphone inputs are configured, the master, booth, and headphone outputs are not delayed. Test procedure:

To test the direct monitor mode, select that mode in the Sound Hardware Preferences, enable direct monitoring on your sound card, start recording, then repeat the above steps, stop recording, then listen to the recording. If you were making sound into the mic exactly on beat, the recording should be exactly on beat. @Palakis would you like to test this? I have not yet implemented latency compensation for auxiliary inputs. |

Spare users the math so they can just copy the round trip time measured by jack_iodelay or a similar program.

|

What are the use cases for the master delay? I am thinking of replacing it with a booth delay option. This could be useful to align the timings the DJ hears the master and booth outputs. For example, the master output may be connected to a PA system with a loud subwoofer that is in front of the stage and distant from the DJ. If the booth speakers are closer to the DJ, they will be off beat without adding a delay. Perhaps the booth delay could be automatically added to the headphone delay if the booth output is enabled. |

| EngineChannel(const ChannelHandleAndGroup& handle_group, | ||

| ChannelOrientation defaultOrientation = CENTER); | ||

| ChannelOrientation defaultOrientation = CENTER, | ||

| bool isInput = false); |

There was a problem hiding this comment.

bool isTalkoverChannel = false

There was a problem hiding this comment.

I was ambivalent about naming this because I was thinking that auxiliary inputs should also have latency compensation. But now I am questioning that because auxiliary inputs may be used for connecting external software. It is not clear -- both for users and developers -- why we have two different types of inputs with different capabilities and it is a burden to maintain them both.

There was a problem hiding this comment.

It is a technical requirement to distinguish them, because one goes to the talkover mix, which is added to the master at the very last and the other is treated as a normal deck input.

Only if you mix in the signal to master only and not to booth and headphones the latency compensation can work.

There was a problem hiding this comment.

I do not understand why those distinctions are necessary. But let's keep this PR focused on the new changes. We can discuss the possibility of consolidating the mic and aux inputs after merging this.

There was a problem hiding this comment.

Ok, just change this to

bool isTalkoverChannel = false

like you did in the cpp file and I am fine.

| // outputs, delay the master, booth, and headphones outputs by the input latency. | ||

| // This way, if the microphone user is on beat with the output they hear coming from | ||

| // the speakers/headphones, the input will remain on beat at the expense of | ||

| // adding some latency. |

There was a problem hiding this comment.

I do not understand that.

We have to do with two time domains.

- The time domain in the booth. To this belongs the voice, the headphones and the booth output.

- The time domain of the master mix, inside the engine.

To mix the voice without delay to the booth signal, we need to delay the master by the same amount then the voice = input latency. This works only, if the user does not actually hear the master.

There was a problem hiding this comment.

Latency compensation is intentionally independent from the master, headphone, and not-yet-implemented booth delay. The use cases for those are to adjust for the placement of speakers, which is a separate issue from keeping microphone inputs aligned with the master mix.

There was a problem hiding this comment.

Yes, but it is in the same place in the signal flow like the master delay.

So tweaking for one will destroy the other.

IMHO one master delay stage is sufficient.

There was a problem hiding this comment.

It is not in the same place now that we have a separate booth output that does not have the master delay applied to it.

There was a problem hiding this comment.

The booth output needs its own delay. If we have that one delay stage should be sufficient.

Or what will be the use case for two chained delay in the master path?

There was a problem hiding this comment.

The talkover mix containing the natural input delay has to be added to the mix engine output artificially delayed by the same amount. Currently you apply the artificially delay to both. So finally the talkover mix is delayed twice.

There was a problem hiding this comment.

You have misread or misunderstood the code. This is what I have implemented already. The latency compensation delay is not applied to the master or booth outputs when the direct monitor mode is configured. It is only applied to the record/broadcast signal before the talkover signal is mixed into it.

There was a problem hiding this comment.

I do not see the "before", I see the delay and the then the write to the sidechain.

All this confusion is a clear sight that a refactoring is required. It is hard to track as all the mode dependencies thought so many screens in a single function. Did you consider my proposal to work with these internal buffers first?

There was a problem hiding this comment.

I do not see the "before", I see the delay and the then the write to the sidechain.

What do you mean by not seeing the "before"? That you cannot see the entire signal path for every mode in one place? That is why I have added comments. Refactoring so the TalkoverMixMode is only checked in one place would increase code duplication, for example needing to process the master effects in every condition. I do not think using pointers to differently named internal buffers would help either. For example, if m_pMaster was a pointer to m_pMixerOut for example, it would be confusing to apply the master gain and balance to m_pMaster. Everything is at its place in the signal chain for specific reasons.

There was a problem hiding this comment.

I have to have a close look to your latest changes. I assume the "before" issue is fixed now.

But unfortunately the code is still cluttered an hard to undersand. One function over multiple screen pages, and reused buffer names for different signal null buffer copies and three modes in one control flow. We hardly need to improve this before merge.

| // If the input signal is being directly monitored (mixed with the master output | ||

| // in hardware), do not delay the master output. The recording/broadcasting signal | ||

| // will be delayed in this case to keep the recorded/broadcasted signal on beat | ||

| // and consistent with what the user hears out the speakers/headphones. |

There was a problem hiding this comment.

I do not understand, the user does not hear the recorded or broadcasted singnal why is there a reason for a delay?

There was a problem hiding this comment.

The user hears the recorded/broadcasted signal out the speakers because the mics are mixed in hardware. Without delaying the record/broadcast signal, a different signal is recorded/broadcasted compared to what is actually heard in the speakers.

There was a problem hiding this comment.

We currently cannot record a signal which is mixed in an external hardware.

It looks like I miss something. Could you explain the signal flow step by step?

There was a problem hiding this comment.

With the direct monitor option, the user would configure their sound card to mix the microphone with the main output in hardware, configure the mic input in Mixxx, and press the talk button on the mic input. In this case, Mixxx does not mix the mic input with the master output because that is already done in hardware, but it is necessary to mix the mic input into the record/broadcast signal.

There was a problem hiding this comment.

As explained below, in this case the user has to mix booth into the hardware mixer and mute master, which has always the talkover mix applied.

| @@ -97,6 +100,13 @@ EngineMaster::EngineMaster(UserSettingsPointer pConfig, | |||

|

|

|||

| m_pMasterDelay = new EngineDelay(group, ConfigKey(group, "delay")); | |||

| m_pHeadDelay = new EngineDelay(group, ConfigKey(group, "headDelay")); | |||

There was a problem hiding this comment.

Use cases:

- m_pHeadDelay: configure a headphone delay if the dance floor is in a distance to hear the main PA in sync with the master headphone mixxx. You can now beatmatch by ear, without hearing a double base from master plus mastermix in headphones. (Alternative you can use closed earphones)

- m_pMasterDelay: The master delay can be used if you have a fast master sound card and a slow headphone sound card.

- this can also be used to configure the input delay in case the master is not hearable for the user.

I this sense, we miss the booth delay to time this new channel matching to the others.

IMHO we can consider if we need an extra inputDelayCompensations stage or if we can just use the master delay.

We have also the issue, that this input delay idea only works for a talkover channel, which is not part of the master mix, so we should probably rename it to talkoverDelayCompensation.

A remaining issue is the turntable, (time coded or path through). We cannot do much here, other than reduce Mixxx audio buffer size, and remove all headphone delays and use closed heaphone types, to not get confused by the sound of the main amp.

There was a problem hiding this comment.

I am unsure what is required for timecode if anything. I suspect it is as difficult to scratch on beat without input latency compensation as it is difficult to count into a microphone on beat without latency compensation. I would think that the deck's buffer would need to have the input latency compensation applied before applying the manipulation from timecode. I have not looked too closely at the timecode processing code, but from a quick look through EngineBuffer::process it looks like this would require a lot of complicated refactoring to implement. Let's leave that for another PR.

There was a problem hiding this comment.

Any additional latency in a time code set-up will make the things worth. The user monitors the result by headphones and what he does should be almost instantly heard. If he hears no double beats in his headphones, there should be non on the dance-floor. This is by the way no difference if he users a turntable or a midi controller to cue the track in.

There was a problem hiding this comment.

Like latency compensation for mics, the scratching processing would not be delayed. The output from deck processing without timecode processing would be delayed, then timecode processing would be applied. This way, if the user scratches on beat, the scratch would be heard on beat.

Implementing this would complicate the application of the latency compensation delay in EngineMaster::process...

There was a problem hiding this comment.

(Alternative you can use closed earphones)

Closed back headphones do not prevent feeling bass frequencies from loud subwoofers.

The master delay can be used if you have a fast master sound card and a slow headphone sound card.

Is this a hypothetical use case or have you actually used a combination of sound cards with which you could perceive a difference in the output latency between them? If so, what were those sound cards? I'd imagine that in most cases the difference between output latencies between sound cards running at the same sample rate and buffer size would be very small.

There was a problem hiding this comment.

This way, if the user scratches on beat, the scratch would be heard on beat.

How can the user scratch on beat without listening to the results on headphone?

We can assume that the user listens to the master mix on headphone expects that if he does not hear double beats, the crowd on the does not hear it as well.

Closed back headphones do not prevent feeling bass frequencies from loud subwoofers.

Yes, but if you hear a clear kick base in your closed headphones and the outside is damped by a reasonable amount, you schould be able to distinguish it. That is the reason for using closed headphones.

I'd imagine that in most cases the difference between output latencies between sound cards running at the same sample rate and buffer size would be very small.

In general this is correct, but not if you try Bluetooth headphones. It is not an important use case, but Mixxx 2.0 support it.

There was a problem hiding this comment.

How can the user scratch on beat without listening to the results on headphone?

We can assume that the user listens to the master mix on headphone expects that if he does not hear double beats, the crowd on the does not hear it as well.

Whether the user is listening to the scratched signal in headphones, booth, or master output is irrelevant. Again, you are confusing the total output delays with the input latency compensation delays. They are applied at different parts of the signal chains and have separate use cases. The general signal path is:

channel processing --> input latency compensation --> output delay --> buffer sent to sound card

/

mic input ----

The input latency compensation delays are only for aligning Mixxx's output with live input signals. The output delays are for aligning what the DJ hears from master/booth/headphone outputs to avoid hearing double beats.

There was a problem hiding this comment.

Whether the user is listening to the scratched signal in headphones, booth, or master output is irrelevant.

Yes, and in no case an artificial input delay is helpful. If you delay the headphones to match the master distance, you remove the double base, beatmatching becomes harder, because of the extra delay. If you cannot afford this extra latency, you can ditch the master distance compensation and isolate the master sound from the users ears.

| static_cast<int>(m_pTalkoverMixMode->get())); | ||

| // These latency values are in milliseconds as required by EngineDelay. | ||

| double inputLatencyCompensation; | ||

| double measuredRoundTripLatency = m_pRoundTripLatency->get(); |

There was a problem hiding this comment.

I do not like this CO, because it can be only a rough assumption. And it does not take the time into account the input monitor needs, or in case of mic the possible extra hardware delay in a pramp or such.

The idea to help the user by such a value is not bad, but I would move the logic to the preferences as a kind wizzard dialog. Here in the engine it is more important to be able to set directly the final "inputLatency"

There was a problem hiding this comment.

I do not like this CO, because it can be only a rough assumption.

Yes, it is a rough assumption, but it is better than nothing. Being a few milliseconds off beat is much better than being 10s of millseconds off beat. It should be as good as it can by default. I think ASIO and JACK have ways to report the actual input latency to applications, but I do not know if that information is exposed by PortAudio (I do not know if CoreAudio reports that information).

And it does not take the time into account the input monitor needs, or in case of mic the possible extra hardware delay in a pramp or such.

What do you mean by input monitor needs? Do you mean a booth delay? That is a separate use case independent from latency compensation for the master/booth mix relative to mic inputs.

The idea to help the user by such a value is not bad, but I would move the logic to the preferences as a kind wizzard dialog. Here in the engine it is more important to be able to set directly the final "inputLatency"

I disagree. This is way too complicated to be exposed to users and users do not need to understand it. All they need to do is measure the real roundtrip latency with a loopback cable and Mixxx should do the math correctly.

There was a problem hiding this comment.

I disagree. This is way too complicated to be exposed to users and users do not need to understand it. All they need to do is measure the real roundtrip latency with a loopback cable and Mixxx should do the math correctly.

Yes, Mixxx should do this, but not in a CO interface hiding the truth. It should calculate the pure delay values in a preferences pane and not in the engine. That is my point.

There was a problem hiding this comment.

Oh, yes. I agree that this logic should be removed from the engine. It is wasteful to continually calculate it in each engine callback, but it was easier to implement this way. I will move it before merging this.

| m_pInputLatencyCompensationDelay = new EngineDelay(group, | ||

| ConfigKey(group, "inputLatency")); | ||

| m_pInputLatencyCompensationHeadphonesDelay = new EngineDelay(group, | ||

| ConfigKey(group, "headMixDelay")); |

There was a problem hiding this comment.

I think we do not need this, because we have already a "headDelay"

There was a problem hiding this comment.

We do need this because the headphones need to have the same latency compensation applied as the master mix. Otherwise the headphones and master mix will be out of time even if the DJ is positioned very close to the master speakers. A separate EngineDelay instance is required for every delayed buffer because each EngineDelay keeps its own internal buffer. I can add a comment here explaining that.

There was a problem hiding this comment.

We have two use cases:

-

Recording: In this case, the user does not hear the master and the talk over channel. It is a good idea not to have any delay in the headphones to keep a low latency setup.

-

Dancefloor: The user hears the master. In this case the latency compensation does not work. The user will hear a double base. In this case a speaker distance compensation makes sense. This is the opposite delay setup compared to 1.

The first can also used if you have a closed booth or closed headphones, to ban the master sound form the users ears.

There was a problem hiding this comment.

Recording: In this case, the user does not hear the master and the talk over channel.

Huh? Why would the user not want to hear these?

It is a good idea not to have any delay in the headphones to keep a low latency setup.

m_pInputLatencyCompensationHeadphonesDelay only delays the PFL mix going to the headphones. The talkover mix going to the headphones is not delayed.

Dancefloor: The user hears the master. In this case the latency compensation does not work. The user will hear a double base. In this case a speaker distance compensation makes sense. This is the opposite delay setup compared to 1.

I think you are confusing the latency compensation with the delays compensating for speaker placement. They are separate issues and the delays need to remain separate.

There was a problem hiding this comment.

Recording: In this case, the user does not hear the master and the talk over channel.

Huh?

Why would the user not want to hear these?

Because he would hear his own voice twice. First direct air feedback, second the delayed signal thou the head phone. You cannot speak good under that circumstances.

m_pInputLatencyCompensationHeadphonesDelay only delays the PFL mix going to the headphones. The talkover mix going to the headphones is not delayed.

The use case is only relevant, if the speaking person is not the headphone listening person. I cannot think how this will work.

So for now: headphone, no talkover.

I think you are confusing the latency compensation with the delays compensating for speaker placement. They are separate issues and the delays need to remain separate.

That's my point. Separate issues. I just wanted to point out, that you cannot do both at the same time, because it is just the opposite.

There was a problem hiding this comment.

If the user does not wish to hear their voice with a delay, they can either listen to the booth output excluding the talkover mix or use direct monitoring. I suspect that the real reason users have complained about hearing their voice in the master output delayed is not because it is delayed, but because it has been mixed out of time without latency compensation and users have not understood what the problem was.

There was a problem hiding this comment.

The user wishes to have no talkover on headphones at any position of the headphone mix knob. That is a requirement.

This was possible in Mixxx 2.0 and schould be still possible.

The real reason is that the user does not want to hear his voice twice. "Echo cancelation"

There was a problem hiding this comment.

That was only possible in Mixxx 2.0 by excluding the talkover mix from the master mix. Take another look at the code. This PR does not change that. If the user wishes to hear the master mix in headphones but not hear the talkover mix, they could select the direct monitor (record/broadcast only) talkover mix mode. Or they could use the master only mode and listen to the booth output with headphones.

There was a problem hiding this comment.

Yes right, but the user does not have the benefit of the additional booth output. If we Mixxx booth into headphones, we can freely mix talkover to sidechain only, sidechain + master, sidechain + master + booth. Only in the later case he has the talkover mix on his headphone. Sidechain + master is a reasonable default.

I consider booth and the master part of the headphone, to be the same. Both is intended to monitor the output. Both schould normally not contain talkover. All use-cases for one apply also for the second.

Did you consider my drawing? Do you understand it?

| m_pKeylockEngine->set(pConfig->getValueString( | ||

| ConfigKey(group, "keylock_engine")).toDouble()); | ||

|

|

||

| // TODO: Make this read only but let EngineMasterTest enable it |

There was a problem hiding this comment.

This comment is wrong, because the user can enable the master channel even if there is no master connected.

There was a problem hiding this comment.

Why? What is the use case for that?

There was a problem hiding this comment.

Because the master mixxx goes to recording and broadcasting as well. In Mixxx 2.0 the user can choose if he needs the master mix and enable or disable it manually on demand.

If a user feets the deck outputs to an external mixer and has no recording or broadcasting, he can disable the master mix.

There was a problem hiding this comment.

There is no need for the user to be concerned with this. Mixxx should detect when processing the master mix is necessary and disable it automatically if it is not needed.

There was a problem hiding this comment.

Yes, that would be nice. I thought about it when I have introduced this preference option. But it was too hard to find clear indicators if we need the master mix or not. This option was a workaround.

I think we should keep this workaround working in this PR for now.

There was a problem hiding this comment.

I agree, which is why I left it as a TODO comment.

There was a problem hiding this comment.

Than the TODO needs to be rewritten:

// TODO: Make this read only and let the Engine decide if it needs a master mix or not.

| m_pBooth = SampleUtil::alloc(MAX_BUFFER_LEN); | ||

| m_pTalkover = SampleUtil::alloc(MAX_BUFFER_LEN); | ||

| m_pTalkoverHeadphones = SampleUtil::alloc(MAX_BUFFER_LEN); | ||

| m_pSidechain = SampleUtil::alloc(MAX_BUFFER_LEN); |

There was a problem hiding this comment.

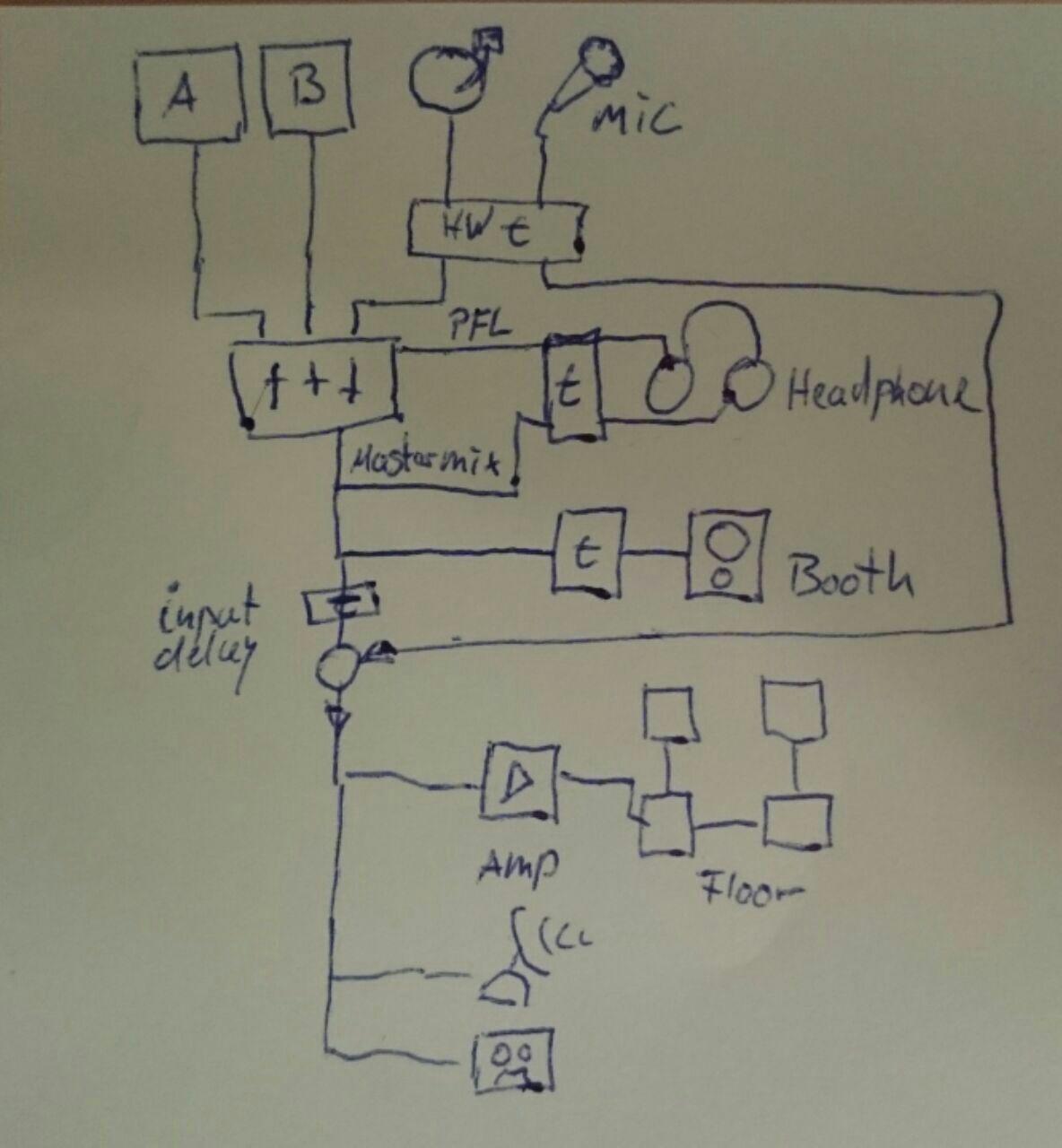

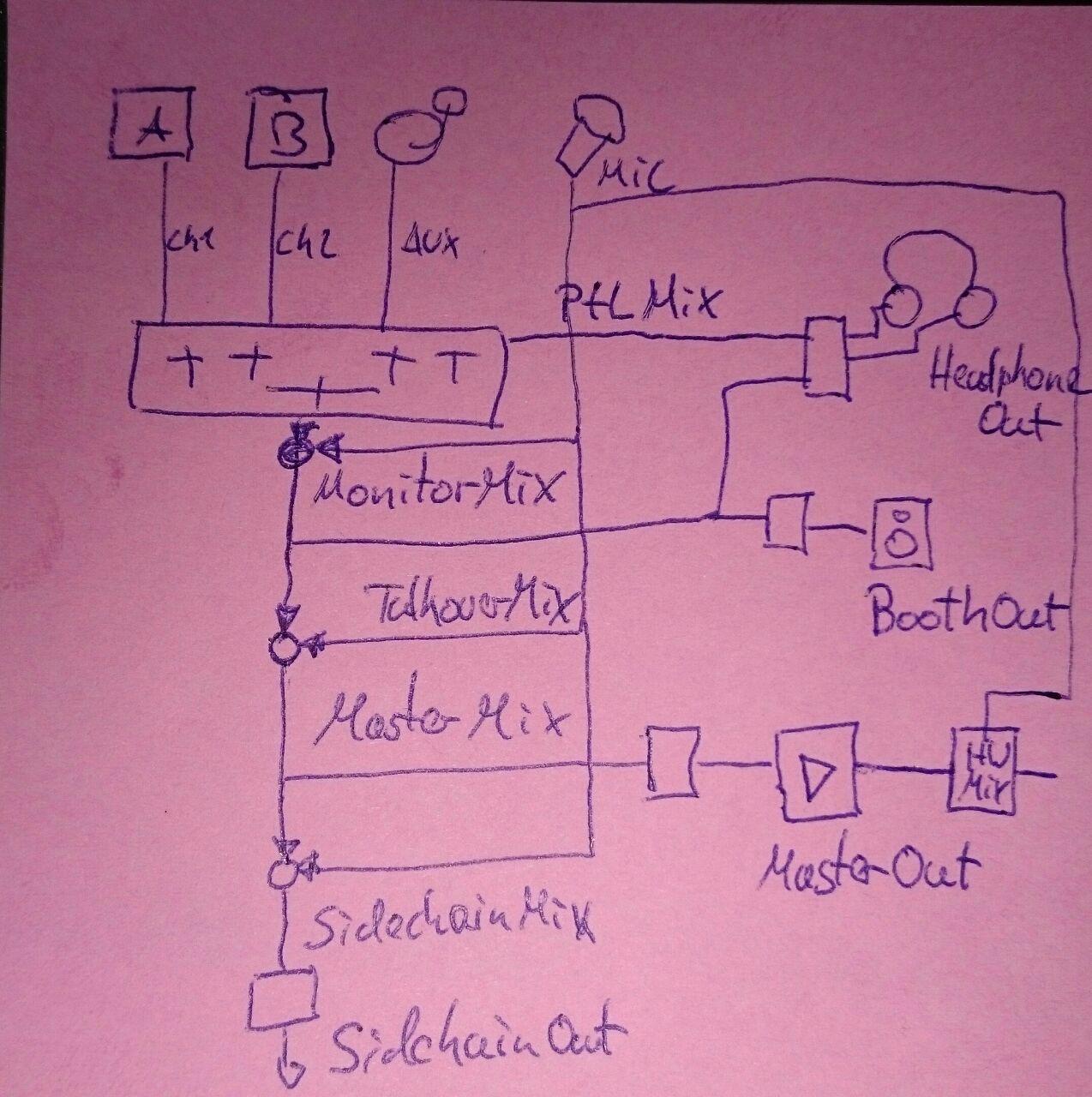

According to my "beautiful" drawing, we three buffers to hold the output:

m_pHead, pMaster and pBooth. We may need a MasterMixxx buffer as well, but I think we can reuse pBooth for this.

pMaster can be used for mixing the talkover Mixxx first.

| SampleUtil::clear(m_pBooth, MAX_BUFFER_LEN); | ||

| SampleUtil::clear(m_pTalkover, MAX_BUFFER_LEN); | ||

| SampleUtil::clear(m_pTalkoverHeadphones, MAX_BUFFER_LEN); | ||

| SampleUtil::clear(m_pSidechain, MAX_BUFFER_LEN); |

There was a problem hiding this comment.

All these clears should be redundant.

| // Mix the talkover mix with the master output, but exclude it from | ||

| // the booth output. | ||

| if (m_bRampingGain) { | ||

| SampleUtil::copy1WithRampingGain(m_pBooth, m_pMaster, |

There was a problem hiding this comment.

It seems to be more efficient to compose the master mixxx in the booth buffer.

In this case we have an in place gain change here.

| m_pMaster, 1.0, | ||

| m_pTalkover, 1.0, | ||

| iBufferSize); | ||

| } else if (configuredTalkoverMixMode == TalkoverMixMode::MASTER_AND_BOOTH) { |

There was a problem hiding this comment.

MASTER_AND_BOOTH, I think the booth signal should be used to Mix into headphones as well, is this already the case? Is the name her still correct? We may consider to switch to an abstract name NORMAL and DONT_HEAR_YOURSELVES or something and explain the details in the doc.

There was a problem hiding this comment.

Mics are only mixed into headphones if PFL is activated for that mic input or the head mix knob lets some of the master signal into the headphones.

There was a problem hiding this comment.

.. or the head mix knob lets some of the master signal into the headphones.

This should not happen with the head mix knob. It should only mix in the master mix without talkover as it is currently the case in Mixxx 2.0

There was a problem hiding this comment.

That is not currently the case. The talkover mix is mixed into the master mix before the master mix is mixed with PFL for the headphone output. This PR does not change that. I do not think that is a problem.

There was a problem hiding this comment.

This is a problem. The user don't like to her his own voice with a delay in headphones. This was the original problem we have solved Mixxx with the talkover mix.

| // because it is being mixed in hardware without the latency of sending | ||

| // the signal in to Mixxx for processing. | ||

| // The talkover mix will be mixed later with recording/broadcasting signal | ||

| // after latency compensation. |

There was a problem hiding this comment.

Refer to the signal path diagram on the Launchpad bug

There was a problem hiding this comment.

Mixxx output (no mic mixed in) ---------- USB ---------- digital-to-analog converter

\

\

microphone ---------- mic preamp -------------------------------- sound card output

\ sound card direct monitoring

\

analog-to-digital converter ---------- USB ---------- Mixxx input ---------- Mixxx recording

/

/

Mixxx output (no mic mixed in)

I think your picture is missing the Booth / Master distinguishing, because you have two times "Mixxx output (no mic mixed in)" on different time domains.

If you connect the booth output and the mic preamp in my picture to the hardware mixer, it should work without any issues, right?

There was a problem hiding this comment.

As mentioned in another comment, the master mix going to the master, booth, and headphone outputs all need the same delay for input latency compensation or they will be out of time with each other. Delays for each output to compensate for speaker placement is a separate issue.

If you connect the booth output and the mic preamp in my picture to the hardware mixer, it should work without any issues, right?

I am confused about your picture. What is hardware mixer, where is the sound card, and where is Mixxx's processing?

There was a problem hiding this comment.

Ah OK, Now I see. Unfortunately it does not work, because the master buffer already contains the talkover signal. You need to delay the master signal before mixing the talkover signal in .

These confusions is the reason why I have original suggested to use internal buffers and only link the external buffers to it. This would make the code easy to read.

We just have to find nice names for the internal buffers.

For example pMixerOut and pTalkover and pMasterMix,

In this case (direct monitoring):

pMasterMix = delay(pMixerOut) + pTakover

pMixerOut -> pMaster

pMixerOut -> pBooth

pMasterMix -> pSidechain

master and booth:

pMasterMix = pMixerOut + pTakover

pMixerMix -> pMaster

pMixerMix -> pBooth

pMasterMix -> pSidechain

master only (closed headphones)

pMasterMix = delay(pMixerOut) + pTakover

pMixerMix -> pMaster

pMixerOut -> pBooth

pMasterMix -> pSidechain

There was a problem hiding this comment.

Unfortunately it does not work, because the master buffer already contains the talkover signal.

You need to delay the master signal before mixing the talkover signal in .

That is what is done.

For example pMixerOut and pTalkover and pMasterMix,

What is pMixerOut in your examples?

There was a problem hiding this comment.

I would like to minimize renaming variables to minimize issues merging this with #1254.

There was a problem hiding this comment.

It is not just renaming variables. It is a new way of operating. First create the required buffer content at the required time domain and then. Pass these buffers to the outputs a configured in the talkoververFreeMixx mode.

pMixerOut is the output after crossfader, before adding the takover channel: talkoververFreeMixx

|

|

||

| if (configuredTalkoverMixMode != TalkoverMixMode::DIRECT_MONITOR | ||

| && m_pNumMicsConfigured->get() > 0) { | ||

| m_pInputLatencyCompensationHeadphonesDelay->process(m_pHead, iBufferSize); |

There was a problem hiding this comment.

Oh whoops, this is double processed. I had fixed this when merging this branch into my personal branch so didn't notice it before.

| // signal needs to be delayed, but the master/booth outputs must not be | ||

| // delayed. | ||

| SampleUtil::copy(m_pSidechain, m_pMaster, iBufferSize); | ||

| m_pInputLatencyCompensationDelay->process(m_pSidechain, iBufferSize); |

There was a problem hiding this comment.

The talkover mix should be mixed into m_pSidechain here. I suspect this was another confusion switching betwen Git branches while I was developing this.

I somehow forgot to add this in a previous commit.

|

I think one source of confusion is that you have split the master to master and booth. So we have got the internal namespace master, which is responsible for master and booth and some other master which does not effect booth. |

|

That is an interesting idea. What exactly do you propose for names? I think the buffer being used for the Master output configured by the user in the preferences must retain the word "Master" in it or it will be confusing to read the code. I do not think referring to an amplifier would be helpful because the Master output could be connected to a hardware mixer or other signal processing equipment like a limiter before going to the amplifier. m_pMasterMix and m_ppMasterOutput would hardly be an improvement. Maybe m_pInternalMix and m_ppMasterOutput? |

|

My original idea was to rename the master output to "amp" or "amplifier" but that is probably a bad idea since the user is used to the name master. Here a proposal: Internal buffers have the "Mix" prefix external buffers / or pointers to buffers have the "Out" prefix. I will draw a new picture ... |

|

Looking on this picture, I realize, that we can simplify it even more if we add only the talkover mix in point only: MonitorMix + TalkoverMix = MasterMix According to the TaloverMixMode we can assign the outputs: |

Good point, setting it to the minimum doesn't really fix the problem or even come close in most cases. So, I have redesigned the UX for the new settings. The spinbox has been relabeled to "Microphone Latency Compensation" and is only enabled when the direct monitor mode is selected. When the user clicks the Apply button, if any mics are configured and the direct monitor mode is selected but the spinbox is 0, an advisory dialog pops up telling the user to measure their round trip latency. If any mics are configured and the direct monitor mode is selected and the user is changing the configured latency, a different dialog box advising them to remeasure their round trip latency pops up, but it does not forcibly change the spinbox anymore. I have changed it so the direct monitor mode will be selected when upgrading from Mixxx 2.0 if the user had previously configured the old "Recording/Broadcasting Only" setting. Users who were using that before will see the new advisory dialog telling them to measure their round trip latency, so the feature will be easily discoverable for them. |

| QMessageBox measureRoundTripLatencyAdvisoryBox; | ||

| measureRoundTripLatencyAdvisoryBox.setIcon(QMessageBox::Information); | ||

| measureRoundTripLatencyAdvisoryBox.setText(tr("Measure Round Trip Latency")); | ||

| measureRoundTripLatencyAdvisoryBox.setInformativeText(tr("Your microphone input will be out of time in your recorded and broadcasted mixes compared to what you hear. To align them, measure the round trip latency through your setup and enter this value for the Microphone Latency Compensation. Refer to the Mixxx Manual for details.")); |

There was a problem hiding this comment.

This text is IMHO to long.

How about:

"Recommended:"

"Measure your round trip latency and enter it as Microphone Latency Compensation"

"Details: Mixxx Manual (hyperlink)"

There was a problem hiding this comment.

We have also the issue, that is appears after pressing OK. After that the preferences dialog is closed, so the "Microphone Latency Compensation" field is out of sight. It may also happen, that the user has already entered a different context and he might be confused why this pop up appears just now.

I am not sure what is the best solution. Show the pop up after the microphone page is populated? Or show the field after you change back to the output pane. Or stay change "OK" to "Apply" in case the box is shown, Or add an inline hint in the output tab. ...

Ideas?

There was a problem hiding this comment.

The text is somewhat long, but I don't think any of the alternatives you proposed would work well. It would be confusing to tell users to refer to the manual without telling them why. I doubt many users would read the manual if they didn't understand what the problem was.

There was a problem hiding this comment.

We have also the issue, that is appears after pressing OK.

No, it appears after pressing Apply. The preferences window is still open after that. Showing it at any earlier time would be annoying and get in the way of choosing settings.

There was a problem hiding this comment.

I do not think it makes sense that there are both OK and Apply buttons in the Preferences window. But I don't think we need to deal with that in this PR.

There was a problem hiding this comment.

Try it out, it appears after Ok. After pressing OK in the pop-up, I get an hourglass and after two seconds the preferences window vanishes.

For the pop up text, the pure text can as long as you like an even longer as long as the most significant is stated at the beginning and an experienced user can stop reading.

There was a problem hiding this comment.

Pressing the "Ok" button and the "Apply" button both call DlgPreferencePage::slotApply, so there is no way for DlgPrefSound to distinguish between them. If the user has changed settings and presses either button, the dialog will show if they have direct monitor mode configured, a microphone configured, and the Microphone Latency Compensation at 0.

It may also happen, that the user has already entered a different context and he might be confused why this pop up appears just now.

When the Ok button was pressed, preferences changed on any page were applied before the window closed. I have changed it to be consistent with the Apply button. The Ok button only applies settings changed on the current page now. This is not really the correct solution though. The correct solution is to ask the user if they want to apply or cancel changed settings when switching from one preference page to another, not just silently discard changes from other pages. But fixing that is beyond the scope of this PR.

There was a problem hiding this comment.

The Ok button only applies settings changed on the current page now.

I'm not sure we should keep this change... I'm thinking about reverting it. Popping up a dialog that could be confusing because it is shown out of context is not so great, but silently not applying changes from other preference pages is bad.

There was a problem hiding this comment.

Yes, right, we should keep the old behavior fro now.

| CSAMPLE boothGain = m_pBoothGain->get(); | ||

| if (m_bRampingGain) { | ||

| SampleUtil::copy1WithRampingGain(m_pBooth, m_pMaster, | ||

| m_boothGainOld, boothGain, iBufferSize); |

There was a problem hiding this comment.

I think we should add booth to headphone:

- Balance is a PA system compensation parameter and need not to be in the headphones.

- Microphone setting "Master output only" is used to remove the talkover mix from the user. I think it is natural to remove it from headphones as well.

There was a problem hiding this comment.

Again, I have already fixed the balance issue in #1254.

Regarding mics in the "master" side of the head mix knob, I have looked at the block diagrams of some hardware mixers like the Pioneer DJM-900 NXS and Allen & Heath Xone:92. Some do it as you are proposing, some do it with mics always mixed in. I think it would make sense to add an option for it, but my concern is making the label for it clear and concise. "Monitor Microphones In Headphones With Right Side Of Cue Mix Knob" is a lot of words that I think few users will care about.

There was a problem hiding this comment.

I think it's fine to leave the headphones as they are in this PR. I don't want to create any more complications when merging this with #1254. The more merge conflicts are created, the more likely it is that regressions will occur when resolving them.

There was a problem hiding this comment.

I do not think we can compare us 1:1 to hardware Mixers. They should have a very low round-trip latency. So the do not have the issue of an annoying echo in the headphones. They have the option to remove the microphones from the booth mainly to avoid a feedback loop.

This cannot happen using Headphones, so there is no strong need to remove the sound from there.

For me it is sufficient to just pass booth to the Headphone Mixxx.

If you think we need an option for this we can add this as an option to Mic Monitor Mode

- Master output only

- Master and headphone (master cue)

- Master, booth and headphone (master cue)

- Direct monitor (recording and broadcasting only)

There was a problem hiding this comment.

I am reluctant to add any more options or make the current set of options affect the headphones when there is no reason for a user to think that they would affect the headphones at all. What is the use case for removing the mics from the right side of the headMix knob? If it is to avoid latency, that is not a good solution. The solution in that case is to use direct monitoring.

There was a problem hiding this comment.

The use case is, if the user does not have a hardware with direct monitor feature.

I do not think that this kind of Mic is uncommon, but If you think the whole use case for it is uncommon, we can skip this feature for now.

There was a problem hiding this comment.

AFAIK there are only two common cases in which direct monitoring is not available: USB microphones and onboard sound cards. There has been a warning on the wiki to avoid USB microphones for a little while, but I will elaborate on this for the 2.1 manual. Then that use case should not be much of an issue (of course there will always be users who buy hardware first then check if it is supported or buy hardware then decide to use Mixxx later). As for onboard sound cards, users are probably not in a loud environment with such a setup and using the direct monitoring mode to disable software monitoring and relying just on monitoring through the air/bone conduction should be good enough.

|

I have just tested this again and I still really dislike the pop up box. I think a better solution would be to highlight the Latency Compensation Box somehow, in the cases you now show the pop up and move the text into a tool-tip. |

|

Can you clarify which popup box you are referring to? The one that appears when the latency compensation is 0 or when changing the configured processing latency? Or both?

How could it be highlighted? Changing the color? That may not be a great idea because the preferences dialog uses the system Qt theme, so specifying a color might not work well with the user's theme. |

|

I mean both boxes. |

|

Okay that seems like a good idea. I'll work on it. |

|

Thanks! |

|

What do you think of the revised UX? Ready for merge? |

|

wonderful, just testing |

|

It works and looks good to me. |

|

Thank you for the extensive review! I am glad we finally have a good solution for all the users who have been confused about broadcasting setups. And that I can record my flute in time with the music :) I will work on revising the manual and merging this into #1254. |

|

This PR has surpassed #1187 for the most comments on a Mixxx PR. :P |

esbrandt

left a comment

esbrandt

left a comment

There was a problem hiding this comment.

Sorry for post merge comments - just found about some changes while updating the translation template

| <widget class="QLabel" name="latencyCompensationWarningLabel"> | ||

| <property name="text"> | ||

| <string>Microphone/Talkover Mix</string> | ||

| <string>warning goes here</string> |

There was a problem hiding this comment.

Placeholder string should not show up in the translation template.

| <widget class="QLabel" name="sampleRateLabel"> | ||

| <property name="text"> | ||

| <string>Headphone Delay</string> | ||

| <string>Sa&mple Rate</string> |

There was a problem hiding this comment.

Why start using ampersands (&) here - for quickly access when holding the Accelerator key?

We do this nowhere else in the preferences dialog, only in the menu bar on top of the application window.

There was a problem hiding this comment.

I don't know why, I just copied the text that was there before. We could remove them.

| <widget class="QLabel" name="keylockLabel"> | ||

| <property name="text"> | ||

| <string>Sample Rate</string> | ||

| <string>&Keylock/Pitch-Bending Engine</string> |

| </item> | ||

| <widget class="QLabel" name="latencyLabel"> | ||

| <property name="text"> | ||

| <string>System Reported &Latency</string> |

There was a problem hiding this comment.

ampersands (&) again - with nothing to select

| <item row="7" column="0"> | ||

| <widget class="QLabel" name="underflowLabel"> | ||

| <property name="text"> | ||

| <string>Buffer &Underflow Count</string> |

There was a problem hiding this comment.

ampersands (&) again - with nothing to select

This replaces the option to exclude the microphone inputs from the master output. That was sufficient for broadcasting users, but there was no way to have a master output with the mics and a booth monitor signal

without the mics for live use. Previously that required plugging a mic into an external hardware mixer or a DJ controller with a mic input hard wired to the master output only, but with this PR it is possible to do with Mixxx and any sound card with enough output channels. The broadcasting use case is still supported by connecting the speakers to Mixxx's booth output, which excludes the mics by default. This is more aligned with how hardware mixers work than the unconventional "Master output/Recording and Broadcasting only" option that was not self-explanatory.

As a nice benefit over using a hardware mixer for this, Mixxx's effects could be used on the mic signal, although the effects would be applied to both the master and booth outputs. Perhaps for Aris' GSoC project he could add a special effect unit that applies effects to the mic signals going to the booth output but not the master output. It would be useful to use the graphic EQ effect in that effect unit to prevent a frequency from feeding back without changing the sound of the master output.

If the microphone user(s) want to hear themselves, the mics can optionally be mixed into the booth output. In this case it is just a more convenient way to duplicate the master output with an independent volume control on the sound card without having to split the master output signal at the OS level, with a splitter cable, or with a hardware mixer.